There is a very handy Moodle plugin called Peer Work, which lets students doing group work assess each other’s contributions and scales the group marks accordingly. In a document about the plugin, Dr Sean Williams notes that benefits for students include “a greater engagement with critical thinking; greater awareness of marking criteria; more perceived fairness in assessment”. This plugin saves a lot of paperwork and spreadsheet wrangling.

The upsides of this plugin are clear, but unfortunately the documentation is sometimes less so, although it does cover some aspects of its use very well. This blog post is meant to cover some of the gaps.

Groups

As this plugin is for group work, you’ll need to create groups in your Moodle course, a process that is well documented.

Using Likert scales

In this plugin, students are asked to rate each other’s skill and/or participation according to a Likert scale. Dr Williams suggests this should be 0-5. You will first need to create a scale, which has a good Moodle documentation page.

Open your course in Moodle. In the left-hand column, click Grades. Under Grades, click Scales. At the bottom of the Scales page, click the “Add a new scale” button. You will have to provide a name for the scale and a comma-separated list of scale values, from low to high. The values I have used are: No contribution, Minimal Contribution, Reasonable contribution, Very good contribution, Excellent Contribution. The name I chose for this scale is “Peer Assessment Scale”.

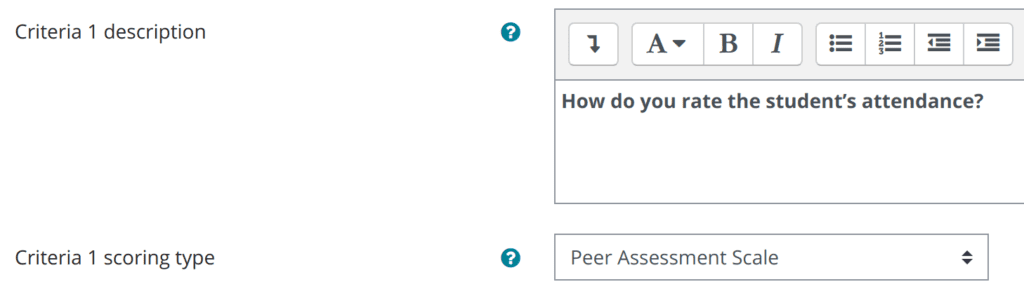

When you create a new Peer Work item on the Moodle page, the settings should look familiar to regular Moodle users. The questions for peer evaluation go in the “Assessment Criteria Settings” section of the settings. You will set several criteria, e.g. “How was this student’s attendance at group meetings?” Each criterion has a description and a “scoring type”. For the scoring type, pick the scale that you made.

Students should see your Likert scale for the question. You must set the scale per question. You can use the one scale you made for every question or, if you feel it’s not a good fit for a particular question, you can make a custom scale for that item. For the attendance criterion above, I could have made a specific scale that started with: Never attended, Attended Rarely, … etc.

Calculator Settings

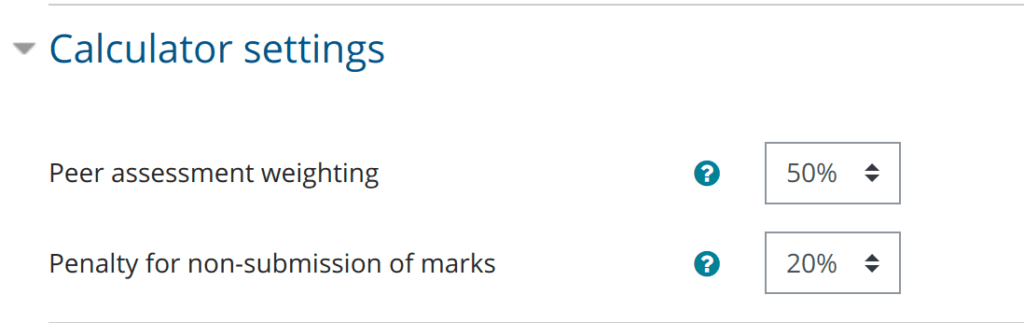

When you are setting up the plugin, you’ll need to configure the calculator settings. These determine how much the students’ marks are affected by their peer evaluations. Dr Williams says, “By default this is set to 50%, meaning that 50% of the group mark is given directly, and 50% is subject to the multiplier. If this gives a spread of marks judged to be too narrow, then it can be adjusted. Normally this shouldn’t be more than 60% or less than 40%.”

There’s also a penalty for non-submission: students get dinged if they fail to evaluate their peers. The default is 20%. Dr Williams suggests students should be told this early.

How this multiplier works is explained in a PDF buried in the plugin’s source code, which is a copy of this web page. Each student’s peer scores are used to calculate a multiplier for the group grade. This is done so that nobody is penalised if a peer’s marks are missing, skew low or skew high.

Each student gets a percentage mark: the multiplier. If the peer weighting is 50% and you’ve given the group a score of 100, each student gets 50 marks straight from the group grade (that 50% is given directly), and the other half of the mark is calculated by multiplying it by their personal percentage mark. So if the student Bob gets an 80 for his personal percentage mark, his overall mark is 50 + (50 * 0.8) = 90.

The percentage mark can exceed 100% in some cases. If Alice’s group has gotten a 90, and Alice has gotten a percentage of 115, her score would be 45 + (45 * 1.15) = 96.75.

Student marks are capped at 100.
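The arithmetic in the Bob and Alice examples can be sketched in a few lines of Python. This is only an illustration of the calculation as described in this post, not the plugin’s actual code: the function name `scaled_mark` and its parameter names are my own, and it leaves out the non-submission penalty, since how that is applied isn’t covered here.

```python
def scaled_mark(group_mark: float, percentage_mark: float,
                peer_weighting: float = 0.5) -> float:
    """Illustrative sketch only; names are hypothetical, not the plugin's API.

    group_mark      -- the mark given to the whole group (0-100)
    percentage_mark -- the student's personal percentage mark, e.g. 80 or 115
    peer_weighting  -- fraction of the group mark subject to the multiplier
    """
    # Part of the group mark that every member receives directly.
    fixed_part = group_mark * (1 - peer_weighting)
    # Part of the group mark that is scaled by the student's multiplier.
    scaled_part = group_mark * peer_weighting * (percentage_mark / 100)
    # Student marks are capped at 100.
    return min(100.0, fixed_part + scaled_part)


print(round(scaled_mark(100, 80), 2))   # Bob: 50 + (50 * 0.8)
print(round(scaled_mark(90, 115), 2))   # Alice: 45 + (45 * 1.15)
print(round(scaled_mark(100, 115), 2))  # would exceed 100, so it is capped
```

Changing `peer_weighting` to 0.4 or 0.6 shows how the spread of marks narrows or widens when you adjust the calculator setting.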
